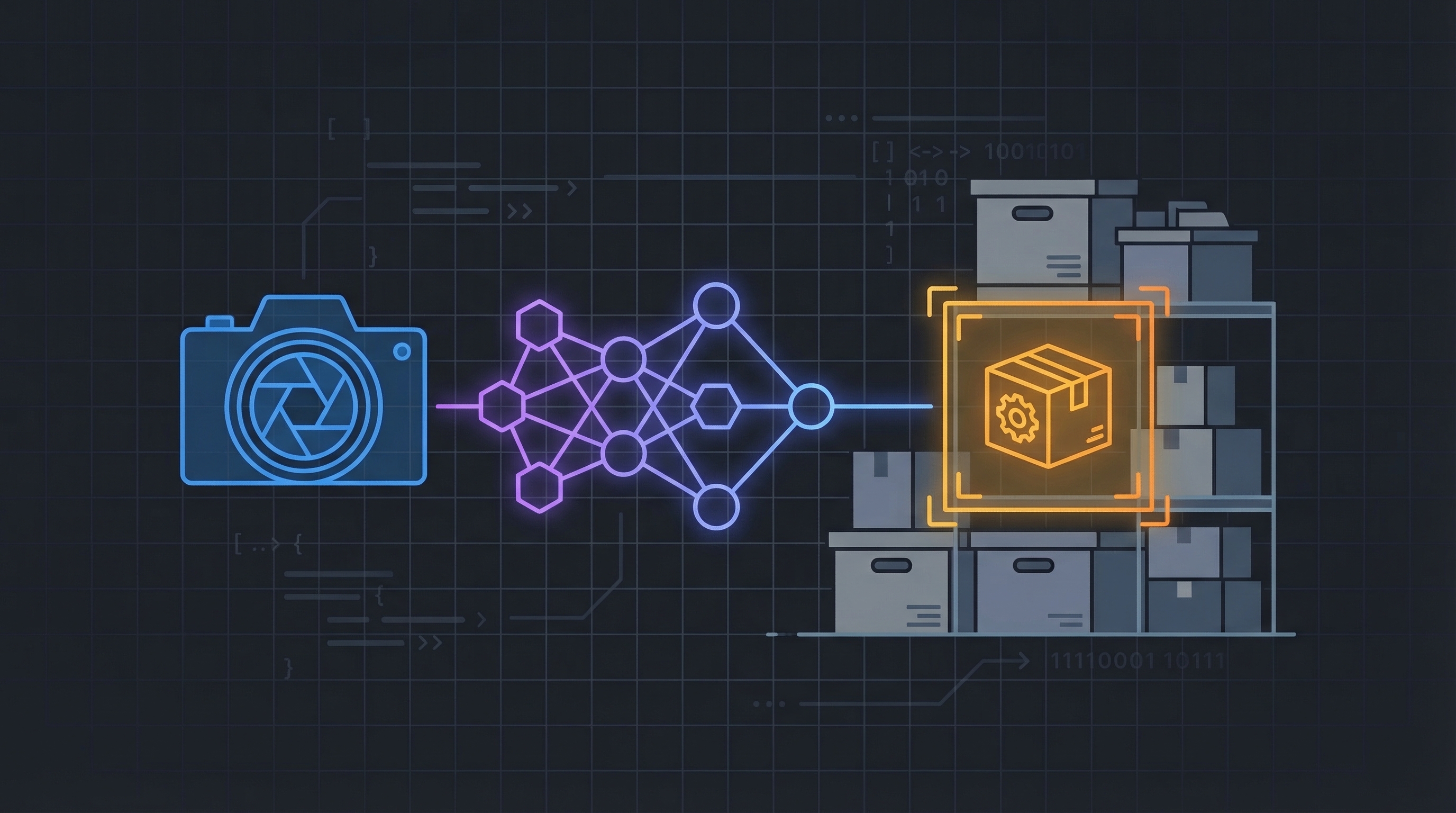

BinBrain uses three AI models working together to identify what’s in your bins: YOLO-World for fast object detection, qwen3-vl for vision-language understanding, and BGE for semantic embedding search. This post covers why each model was chosen, how they evolved during development, and the architecture that lets users teach the system new object classes at runtime — no retraining required.

The Problem: 80 Classes Aren’t Enough

BinBrain started with YOLO11s, a standard object detector trained on the COCO dataset. It’s fast, it’s accurate, and it knows exactly 80 things: person, bicycle, car, bottle, scissors. That’s great for general computer vision benchmarks. It’s terrible for an inventory system where someone stores M3 hex bolts, capacitors, and wire nuts.

The first version worked — detect() would find “scissors” or “bottle” reliably — but users couldn’t add their own vocabulary. If YOLO11s didn’t know about it, it didn’t exist.

The Three-Model Architecture

The solution uses three models at different stages, each chosen for a specific strength:

1. YOLO-World — Fast Detection with Dynamic Classes

YOLO-World is a zero-shot object detector. Unlike traditional YOLO models that are locked to their training classes, YOLO-World accepts a text vocabulary at runtime through CLIP text embeddings. Call model.set_classes(["M3 hex bolt", "Phillips screwdriver", "capacitor"]) and it immediately detects those objects — no fine-tuning, no retraining.

Specifically, BinBrain uses yolov8s-worldv2. The “v2” matters: benchmarking showed better accuracy than v1 at the same inference speed, with the only penalty being a 5.7-second cold start for the CLIP warmup (one-time cost). There is no nano variant — yolov8s-world is the smallest available.

The confidence threshold is set lower than traditional YOLO (0.15 vs 0.25) because zero-shot detection is inherently less confident than supervised detection on known classes. This is exposed as the YOLO_WORLD_CONF environment variable so it can be tuned per deployment.
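
Reading that setting could look like the sketch below. Only the variable name `YOLO_WORLD_CONF` and the 0.15 default come from the text above; the function name and the range check are illustrative assumptions.

```python
import os
from typing import Mapping, Optional

DEFAULT_CONF = 0.15  # lower than the 0.25 used for supervised YOLO


def yolo_world_conf(env: Optional[Mapping[str, str]] = None) -> float:
    """Return the detection threshold from YOLO_WORLD_CONF, or the default."""
    env = os.environ if env is None else env
    raw = env.get("YOLO_WORLD_CONF", "").strip()
    if not raw:
        return DEFAULT_CONF
    value = float(raw)  # raises ValueError for non-numeric input
    if not 0.0 < value < 1.0:
        raise ValueError(f"YOLO_WORLD_CONF must be between 0 and 1, got {value}")
    return value
```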

2. qwen3-vl — Vision-Language Fallback

When YOLO-World returns zero detections (the object isn’t in the class vocabulary yet), BinBrain falls back to qwen3-vl running locally via Ollama. This is a full vision-language model that can describe what it sees in natural language: “M3 hex bolt (fastener, 0.85 confidence)”.

The model went from qwen3-vl:4b to qwen3-vl:2b during development. The 2B variant runs faster on constrained hardware with negligible quality loss for item identification. Images are downscaled to a max of 1280px on the longest side before being sent to Ollama, keeping inference fast and memory pressure manageable.
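
The downscaling rule is simple to state in code. This sketch only computes the target dimensions; the function name is hypothetical, and the actual resize step (for example Pillow's `Image.thumbnail((1280, 1280))`, which also preserves aspect ratio and never enlarges) is left out.

```python
def fit_longest_side(width: int, height: int, max_side: int = 1280) -> tuple[int, int]:
    """Scale (width, height) so the longest side is at most max_side.

    Images that already fit are returned unchanged; aspect ratio is preserved.
    """
    longest = max(width, height)
    if longest <= max_side:
        return width, height
    scale = max_side / longest
    return max(1, round(width * scale)), max(1, round(height * scale))
```

A 4032x3024 phone photo becomes 1280x960, while an 800x600 image passes through untouched.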

The iOS client orchestrates the pipeline: call /detect first, and if empty, call /suggest. This keeps the API endpoints single-purpose and avoids coupling the two strategies in server code.
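
The real orchestration lives in the iOS client, but the control flow can be sketched in Python for consistency with the server-side examples. The two callables stand in for HTTP calls to `/detect` and `/suggest`; their signatures and the returned shapes are assumptions.

```python
from typing import Callable, Sequence


def identify(
    image: bytes,
    detect: Callable[[bytes], Sequence[dict]],
    suggest: Callable[[bytes], Sequence[dict]],
) -> tuple[str, list[dict]]:
    """Try fast detection first; fall back to the vision-language model if empty."""
    detections = list(detect(image))
    if detections:
        return "detect", detections
    # Nothing in the current vocabulary matched, so ask the slower model.
    return "suggest", list(suggest(image))
```

Because the fallback decision sits in the caller, each endpoint stays responsible for exactly one strategy.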

3. BGE — Semantic Embedding Search

BAAI/bge-small-en-v1.5 generates 384-dimensional embeddings for semantic search. When a user searches “small screwdriver,” they find items cataloged as “Phillips #0 screwdriver” because the embeddings capture semantic similarity rather than requiring exact text matches.

Embeddings are stored in PostgreSQL via pgvector with an HNSW cosine index. The API returns a cosine similarity score (1.0 - distance) so clients can display percentage matches. An earlier bug in the iOS app computed a different normalization (dividing the raw pgvector distance by 2 instead of using the server’s pre-computed score), which inflated displayed similarities by up to 25 percentage points.
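
The size of that discrepancy is easy to reproduce. The sketch below assumes the client-side formula was `1 - distance / 2`, which is one reading of the description above rather than the confirmed code; both function names are illustrative.

```python
def similarity_from_distance(distance: float) -> float:
    """Server-side score: cosine similarity is 1 minus cosine distance."""
    return 1.0 - distance


def halved_distance_similarity(distance: float) -> float:
    """Assumed form of the client bug: distance halved before subtracting."""
    return 1.0 - distance / 2.0


def inflation_points(distance: float) -> float:
    """How many percentage points the buggy score overstates the match."""
    return 100.0 * (halved_distance_similarity(distance) - similarity_from_distance(distance))
```

Under this assumption the overstatement is half the distance, so a cosine distance of 0.5 shows as 75% instead of 50%.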

YOLO-World Dynamic Detection: The Bootstrap Loop

The key insight is that the class vocabulary grows through normal use. Here’s the loop:

- User takes a photo of bin contents

- YOLO-World checks against known classes — finds “scissors” (known), done

- YOLO-World finds nothing → fall back to qwen3-vl

- qwen3-vl identifies “M3 hex bolt” → iOS shows confirm/reject UI

- User confirms → POST /classes/confirm → persisted to Postgres, CLIP re-encodes the updated vocabulary in a background thread

- Next photo with hex bolts → YOLO-World detects them instantly

The ClassRegistry service manages this lifecycle. It holds an in-memory copy of the class list (thread-safe with locks), persists to a confirmed_classes table in Postgres, and fires a reload callback that triggers model.set_classes() in a background thread. The detection service wraps model access with a threading.RLock so inference never runs against a half-reloaded vocabulary.
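
A simplified sketch of that lifecycle, not the project's actual code: persistence is reduced to an injected `persist` callable (standing in for an insert into `confirmed_classes`), and the method names are assumptions. Only the overall shape follows the description: a locked in-memory list, a reload callback run on a background thread, and an `RLock` around model access.

```python
import threading
from typing import Any, Callable, Iterable, Optional


class ClassRegistry:
    """In-memory class list guarded by a lock, with a reload callback."""

    def __init__(
        self,
        initial: Iterable[str],
        persist: Callable[[str], None],
        on_reload: Callable[[list[str]], None],
    ) -> None:
        self._lock = threading.Lock()
        self._classes = list(dict.fromkeys(initial))  # dedupe, keep order
        self._persist = persist
        self._on_reload = on_reload

    def snapshot(self) -> list[str]:
        with self._lock:
            return list(self._classes)

    def confirm(self, name: str) -> Optional[threading.Thread]:
        """Add a confirmed class; returns the reload thread, or None if known."""
        with self._lock:
            if name in self._classes:
                return None
            self._persist(name)
            self._classes.append(name)
            vocabulary = list(self._classes)
        worker = threading.Thread(target=self._on_reload, args=(vocabulary,), daemon=True)
        worker.start()
        return worker


class DetectionService:
    """Serializes inference and vocabulary reloads on one model instance."""

    def __init__(self, model: Any) -> None:
        self._model = model
        self._model_lock = threading.RLock()

    def reload(self, vocabulary: list[str]) -> None:
        with self._model_lock:
            self._model.set_classes(vocabulary)

    def detect(self, image: Any, conf: float = 0.15) -> Any:
        with self._model_lock:  # never runs against a half-reloaded vocabulary
            return self._model.predict(image, conf=conf)
```

Wiring the two together is a matter of passing `service.reload` as the registry's `on_reload` callback.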

CLIP: The Hidden Dependency

YOLO-World needs CLIP’s ViT-B/32 text encoder to convert class names into embeddings for set_classes(). This adds approximately 1GB of RAM regardless of the YOLO model size or class count.

The critical discovery during development: there are three incompatible CLIP packages, namely OpenAI’s clip, the open_clip_torch community fork, and Ultralytics’ own fork at github.com/ultralytics/CLIP.git. Only the Ultralytics fork works with the ultralytics YOLO-World integration. This cost hours of debugging before a pre-mortem analysis flagged the dependency ambiguity.

The Docker build also required adding git to the builder stage — pip needs it to clone the CLIP fork, and python:3.12-slim doesn’t include it.

Benchmarking and Scaling

After the CLIP warmup (5.7 seconds on first call), set_classes() time grows roughly linearly with vocabulary size, at about 11 ms per class:

| Classes | set_classes() Time |
|---|---|
| 50 | ~0.6s |
| 100 | ~1.1s |
| 200 | ~2.2s |
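
As a rough check on the linear trend, an ordinary least-squares line through the three measurements above gives a per-class cost. The fit is only as good as three approximate data points, so treat any extrapolation beyond 200 classes as a guess.

```python
def fit_line(points: list[tuple[float, float]]) -> tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# (class count, seconds) taken from the table above
MEASURED = [(50, 0.6), (100, 1.1), (200, 2.2)]
slope, intercept = fit_line(MEASURED)
print(f"about {slope * 1000:.1f} ms per class, {intercept:.2f} s fixed overhead")
```

The slope works out to roughly 11 ms per class with a small fixed overhead.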

For a single-user system running on a Mac, blocking detection requests for 1-2 seconds during a class reload is acceptable. The code logs timing around every set_classes() call so regressions are immediately visible. For future multi-user or Raspberry Pi deployments, a double-buffering approach (load new classes on a spare model instance, then swap the pointer) would eliminate the blocking entirely.
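
The double-buffering idea might look like the sketch below. It is not implemented in BinBrain; `build_model` is a hypothetical factory that returns a model already configured with the given classes, and a second instance roughly doubles the model's memory use while both are alive.

```python
import threading
from typing import Any, Callable, Sequence


class DoubleBufferedDetector:
    """Builds the next model off to the side, then swaps a reference."""

    def __init__(
        self, build_model: Callable[[list[str]], Any], classes: Sequence[str]
    ) -> None:
        self._build_model = build_model
        self._swap_lock = threading.Lock()
        self._active = build_model(list(classes))

    def reload(self, classes: Sequence[str]) -> None:
        # The slow part (loading weights, encoding classes) runs without the
        # lock, so detect() keeps serving from the old instance meanwhile.
        fresh = self._build_model(list(classes))
        with self._swap_lock:
            self._active = fresh

    def detect(self, image: Any, conf: float = 0.15) -> Any:
        with self._swap_lock:  # held only long enough to read the reference
            model = self._active
        return model.predict(image, conf=conf)
```

In-flight requests finish on whichever instance they grabbed, so the lock is held only for the pointer swap rather than for the whole reload.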

The Full Stack

| Layer | Technology | Purpose |
|---|---|---|
| Detection | YOLO-World (yolov8s-worldv2) | Fast object detection with dynamic vocabulary |
| Vision LLM | qwen3-vl:2b via Ollama | Natural language item identification (fallback) |
| Embeddings | BGE-small-en-v1.5 via fastembed | Semantic search across cataloged items |
| Text Encoder | CLIP ViT-B/32 (Ultralytics fork) | Convert class names to detection embeddings |
| Vector DB | PostgreSQL + pgvector (HNSW cosine) | Similarity search and class persistence |
| API | FastAPI + uvicorn | REST endpoints with API key auth |
| Containers | Docker Compose (CPU-only PyTorch) | Reproducible deployment |

Everything runs locally — no cloud inference, no API keys to external AI services. The Docker image uses CPU-only PyTorch to save ~600MB versus the default CUDA bundle, with Apple MPS acceleration available in local development.
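
One way to cover both environments with the same code is to choose the device at startup. This is a sketch under the assumption that PyTorch may or may not be installed; the function name is illustrative, and the result would be passed to whatever inference call accepts a device string.

```python
def pick_device() -> str:
    """Return "mps" when Apple's Metal backend is usable, otherwise "cpu"."""
    try:
        import torch  # optional here so the helper degrades gracefully
    except ImportError:
        return "cpu"
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"
    return "cpu"
```

Inside the CPU-only Docker image this always resolves to `"cpu"`.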

What’s Next

YOLOE (a newer variant with native text prompts and +3.5 AP over YOLO-World at 1.4x faster inference) is on the evaluation list. The current architecture — where the class vocabulary lives in Postgres and the detection model is loaded behind an abstraction — means swapping the underlying model requires changing one function, not redesigning the system.
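
The kind of seam described here can be illustrated with a small interface. Everything in this sketch is hypothetical, including the `Detector` protocol, the `StubDetector` stand-in, and the `load_detector` factory; it shows the shape of the abstraction rather than BinBrain's actual code.

```python
from typing import Protocol, Sequence


class Detector(Protocol):
    """What the rest of the system needs from any detection backend."""

    def set_classes(self, classes: Sequence[str]) -> None: ...

    def detect(self, image: bytes, conf: float = 0.15) -> list[tuple[str, float]]: ...


class StubDetector:
    """Stand-in backend that reports every known class at full confidence."""

    def __init__(self) -> None:
        self._classes: list[str] = []

    def set_classes(self, classes: Sequence[str]) -> None:
        self._classes = list(classes)

    def detect(self, image: bytes, conf: float = 0.15) -> list[tuple[str, float]]:
        return [(name, 1.0) for name in self._classes]


def load_detector(vocabulary: Sequence[str]) -> Detector:
    """The single place to change when swapping in a different model."""
    detector = StubDetector()
    detector.set_classes(vocabulary)
    return detector
```

Callers depend only on `Detector`, so replacing the backend means editing `load_detector` and nothing else.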

Related: BinBrain: Building a Local AI Vision Inventory System with Ollama and FastAPI

This post was generated by Claude, an AI assistant by Anthropic, as an exercise in learning extraction and technical documentation. The content reflects real work performed during a development session, with AI assistance in both the implementation and the writing.