When I adopted YOLO-World for BinBrain’s detection pipeline, the appeal was clear: open-vocabulary object detection that could identify arbitrary objects using text prompts instead of being locked to a fixed set of COCO classes. It worked. But a few weeks of real-world use exposed a pattern worth investigating: the model was missing objects that were clearly present in photos, and inference wasn’t as fast as I’d hoped on Apple Silicon.

YOLOE, published by THU-MIG and accepted at ICCV 2025, claims to fix both problems: +3.5 AP over YOLO-World v2 on LVIS benchmarks, and 1.4x faster inference. More importantly, it uses the exact same set_classes() API, meaning it’s a drop-in replacement. The question wasn’t whether it was theoretically better — it was whether those paper numbers would hold on my actual bin photos.

The Problem with Paper Numbers

Research benchmarks use standardized datasets. BinBrain photographs bins full of miscellaneous small objects — screwdrivers, capacitors, glue sticks — under inconsistent lighting, at odd angles, often partially occluded. LVIS AP improvements don’t guarantee improvements on photos of a messy parts drawer.

So rather than trusting the claims, I ran head-to-head benchmarks on 14 real BinBrain images using the benchmark harness I’d built previously.

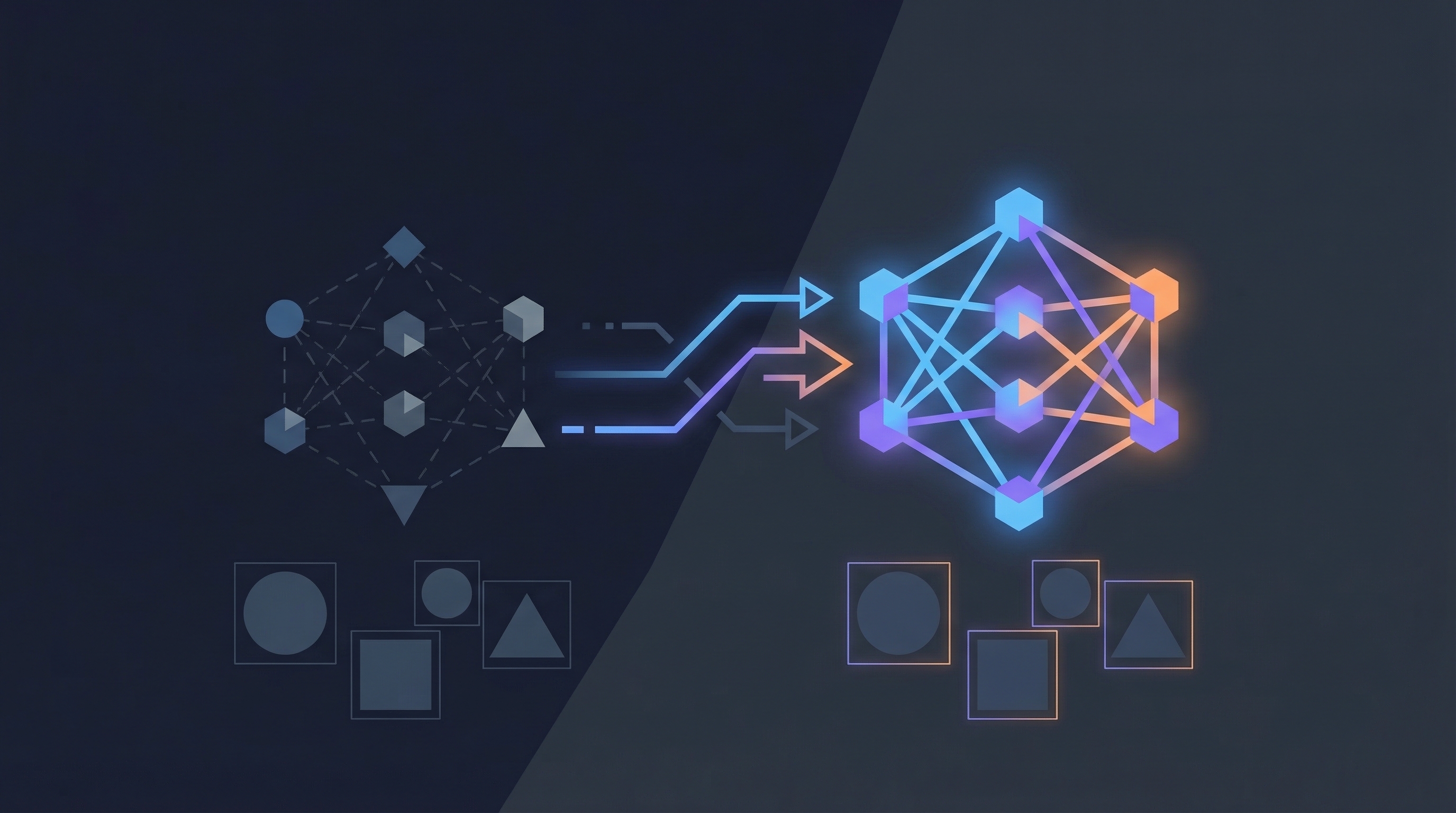

How YOLOE Differs Architecturally

YOLO-World carries CLIP’s text encoder at inference time — every detection pass involves encoding your class vocabulary through a heavyweight transformer. YOLOE re-parameterizes this alignment module away after training. The text embeddings are baked into the detection head, so at inference you get the same open-vocabulary flexibility without the CLIP overhead.
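To make the re-parameterization idea concrete, here is a toy sketch in plain Python. It is not YOLOE’s actual code: the 2-D embeddings, the `refine` matrix, and every function name are made up for illustration. It only shows why a linear refinement of text embeddings can be folded into the classification weights once, leaving a single dot product per class at inference.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical text embeddings for two classes (normally from a text encoder).
text_embeddings = {"wrench": [1.0, 0.0], "scissors": [0.0, 1.0]}

# Hypothetical learned linear refinement applied to each text embedding.
refine = [[0.5, 0.5], [-0.5, 0.5]]


def score_unfused(feature, class_name):
    """Training-time view: refine the text embedding on every call."""
    refined = matvec(refine, text_embeddings[class_name])
    return dot(feature, refined)


# One-time folding step: precompute the refined embeddings and keep only those.
fused_head = {name: matvec(refine, emb) for name, emb in text_embeddings.items()}


def score_fused(feature, class_name):
    """Inference-time view: one dot product against precomputed weights."""
    return dot(feature, fused_head[class_name])


feature = [2.0, 4.0]  # hypothetical image feature at one location
```

Both scoring functions return the same value for any feature, but the fused version never touches `refine` or the raw text embeddings again after the folding step.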

YOLOE also supports three prompt modes in a single architecture: text prompts (like YOLO-World), visual prompts (point at a reference object), and prompt-free mode with a large built-in vocabulary (4,585 classes in the checkpoint I tested). For BinBrain, text prompts are what matter: they let users teach the system new object classes on the fly.

YOLOE Object Detection Benchmark Results

Both models were configured identically: same 24-class vocabulary, same confidence threshold (0.10), same NMS IoU threshold (0.45), same Apple Silicon MPS device. I ran each model twice, once cold and once warm, because YOLOE downloads a 572MB MobileCLIP encoder on first run.
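The harness itself isn’t reproduced in this post, so the following is only a minimal sketch of how such a comparison could be timed. The `benchmark` function, its `detect` callable interface, and the untimed warm-up pass are assumptions for illustration, not the actual BinBrain code.

```python
import time
from statistics import mean


def benchmark(detect, image_paths, warmup=True, clock=time.perf_counter):
    """Time `detect` on each image and count images with at least one detection.

    `detect` is any callable that takes an image path and returns a sized
    collection of detections (hypothetical interface; wrap your model's
    predict call in it).
    """
    if warmup and image_paths:
        # Untimed pass so one-time costs (weight downloads, device
        # initialisation) do not land in the measurements.
        detect(image_paths[0])

    timings = []
    images_with_detections = 0
    for path in image_paths:
        start = clock()
        detections = detect(path)
        timings.append(clock() - start)
        if len(detections) > 0:
            images_with_detections += 1

    return {
        "images": len(image_paths),
        "images_with_detections": images_with_detections,
        "avg_seconds": mean(timings) if timings else 0.0,
    }
```

With Ultralytics, `detect` could be something like `lambda p: model.predict(p, conf=0.10, iou=0.45, device="mps", verbose=False)[0].boxes`, using the same thresholds for both models so that only the model differs between runs.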

Cold run results were misleading (YOLO-World appeared faster), so here are the warm-cache numbers:

| Model | Images with detections | Avg inference per image (warm) | Notable finds |
|---|---|---|---|
| YOLO-World v2 | 11/14 (79%) | 0.412s | book, battery, bottle |
| YOLOE (text-prompt) | 12/14 (86%) | 0.292s | + scissors, wrench, glue stick, tape roll |

YOLOE produced detections in one more image than YOLO-World (12 vs 11 of 14), including images where YOLO-World returned nothing. On one image containing scissors and a glue stick, YOLO-World produced zero detections while YOLOE correctly identified both items.

The speed improvement closely matched the paper’s 1.4x claim: 0.412s vs 0.292s per image on MPS, a ratio of about 1.41.
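For anyone who wants to check the arithmetic, this short snippet recomputes the speedup and detection rates from the warm-cache figures in the table above. The dictionary layout and the helper name are just for illustration.

```python
# Warm-cache figures copied from the results table.
results = {
    "YOLO-World v2": {"detected": 11, "total": 14, "avg_s": 0.412},
    "YOLOE (text-prompt)": {"detected": 12, "total": 14, "avg_s": 0.292},
}


def detection_rate(row):
    """Fraction of images in which the model found at least one object."""
    return row["detected"] / row["total"]


baseline = results["YOLO-World v2"]
candidate = results["YOLOE (text-prompt)"]

speedup = baseline["avg_s"] / candidate["avg_s"]
rate_gain = detection_rate(candidate) - detection_rate(baseline)

print(f"speedup: {speedup:.2f}x")               # speedup: 1.41x
print(f"detection rate gain: {rate_gain:.1%}")  # detection rate gain: 7.1%
```

With only 14 images, that 7.1-point gain corresponds to a single additional image, so the speed difference is the more robust of the two results.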

What About Prompt-Free Mode?

I also tested YOLOE’s prompt-free variant (yoloe-v8s-seg-pf.pt), which ships with a 4,585-class vocabulary and requires no set_classes() call. It detected objects in all 14 images — impressive coverage — but the labels were nonsensical: “gecko,” “leprechaun,” “dragonfly” for photos of electronic components. With 4,585 possible classes, the model finds something in every image but often picks the wrong label from its enormous vocabulary. Prompt-free mode isn’t useful for BinBrain’s domain-specific needs.

The Swap: Three Lines Changed

Because YOLOE uses the identical Ultralytics API, the production swap was minimal:

- Changed `from ultralytics import YOLOWorld` to `from ultralytics import YOLOE`
- Changed the constructor from `YOLOWorld(path)` to `YOLOE(path)`
- Updated the default model path from `yolov8s-worldv2.pt` to `yoloe-v8s-seg.pt`
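Put together, the three changes look roughly like the sketch below. The `load_detector` wrapper and the `DEFAULT_MODEL_PATH` constant are hypothetical names, not BinBrain’s actual code; only the import, the constructor, and the weight filenames come from the list above.

```python
DEFAULT_MODEL_PATH = "yoloe-v8s-seg.pt"  # was: "yolov8s-worldv2.pt"


def load_detector(class_names, model_path=DEFAULT_MODEL_PATH):
    """Load the open-vocabulary detector and set its text-prompt classes."""
    # Imported here so this sketch can be loaded without ultralytics
    # installed; a top-level import works just as well.
    from ultralytics import YOLOE  # was: from ultralytics import YOLOWorld

    model = YOLOE(model_path)  # was: YOLOWorld(model_path)

    # The explicit two-argument form is the one quoted in the gotchas
    # section. BinBrain's existing set_classes() call was left unchanged.
    model.set_classes(class_names, model.get_text_pe(class_names))
    return model
```

Calling `load_detector(["wrench", "scissors"])` downloads the weights on first use, which is the cold-start cost discussed in the benchmark section.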

No changes to set_classes(), predict(), or any downstream code. The class bootstrapping system I built for YOLO-World works identically with YOLOE.

Gotchas to Watch For

The YOLOE ecosystem has a few rough edges worth noting:

- All pretrained weights are segmentation models (the `-seg` suffix). If you only need bounding boxes, just ignore the mask output; the `.boxes` attribute works the same way.
- Text prompts break after fine-tuning (GitHub Issues #19977, #21268). If you fine-tune on custom data, you need an explicit workaround: `model.set_classes(names, model.get_text_pe(names))`.
- `set_classes()` isn’t available after ONNX/TensorRT export, so you must bake in your class vocabulary before exporting. For BinBrain, which runs inference in a Docker container with the Python model, this isn’t an issue.
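Two of these gotchas are easy to handle in a few lines. The helpers below are a sketch under assumptions: the function names are made up, and the code relies only on the `boxes`, `names`, `set_classes()`, `get_text_pe()`, and `export()` members of the Ultralytics objects.

```python
def boxes_only(result):
    """Return (label, confidence, [x1, y1, x2, y2]) tuples, ignoring masks."""
    boxes = result.boxes
    detections = []
    for cls_id, conf, xyxy in zip(boxes.cls, boxes.conf, boxes.xyxy):
        label = result.names[int(cls_id)]
        detections.append((label, float(conf), [float(v) for v in xyxy]))
    return detections


def export_with_vocabulary(model, class_names, fmt="onnx"):
    """Fix the class vocabulary first, then export (the order matters)."""
    model.set_classes(class_names, model.get_text_pe(class_names))
    return model.export(format=fmt)
```

`boxes_only` never reads `result.masks`, so the segmentation output is simply dropped. `export_with_vocabulary` sets the classes before exporting because the exported model can no longer accept a new vocabulary.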

Validation

After the swap, I rebuilt the Docker containers and re-ran the end-to-end test that uploads a real photo, triggers detection, and verifies the results against the database’s confirmed class vocabulary. It passed in 1.92 seconds.
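The end-to-end test isn’t shown here, but its core assertion can be sketched as a pure function. Everything in this snippet is hypothetical (the function name, the case-insensitive matching, the way labels and vocabulary arrive as plain lists); it only illustrates the idea of checking detected labels against the confirmed vocabulary.

```python
def unexpected_labels(detected_labels, confirmed_vocabulary):
    """Return detected labels that are not in the confirmed class vocabulary."""
    allowed = {name.strip().lower() for name in confirmed_vocabulary}
    return sorted(
        {label for label in detected_labels if label.strip().lower() not in allowed}
    )


# Example: a stray label outside the vocabulary is reported.
stray = unexpected_labels(["Wrench", "gecko", "gecko"], ["wrench", "scissors"])
print(stray)  # ['gecko']
```

A test built on this would assert that the returned list is empty for the photo under test.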

The original BinBrain architecture was designed to make model swaps like this painless — the detection service is isolated behind a clean interface, and the class registry is model-agnostic. That design decision paid off here.

Key Takeaway

Don’t trust paper benchmarks for your specific domain. Run your own data through both models and measure what matters to your use case. In this case the paper numbers held, but only in the warm-cache runs; the cold-start comparison would have told a misleading story about relative performance.

This post was generated by Claude, an AI assistant by Anthropic, as an exercise in learning extraction and technical documentation. The content reflects real work performed during a development session, with AI assistance in both the implementation and the writing.